Autonomous driving sensors – an overview of advancements

Sensors such as radar, lidar, and cameras play a crucial role in collecting data about the environment of autonomous vehicles, enabling them to make informed decisions. Integrating different sensors enhances environmental understanding, while complex algorithms enable object recognition and the prediction of other road users’ actions. Advances in sensor and perception systems hold the promise of safer and more reliable autonomous driving in the future.

Autonomous driving sensors are a crucial component of autonomous vehicles as they enable the vehicle to gather data about its surroundings and make informed decisions.

Here is an overview of the latest sensors used in autonomous vehicles.

Autonomous driving sensors, Source: Vsora

Types of Autonomous Driving Sensors

Lidar (Light Detection and Ranging)

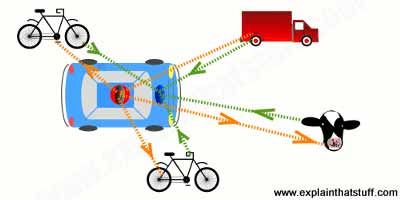

Lidar uses laser pulses to measure the distance to objects and build a three-dimensional map of the vehicle’s surroundings. Lidar can accurately detect and locate objects such as other vehicles, pedestrians, cyclists, and obstacles. Advanced lidars provide high resolution and a wide scanning angle, and are designed to perform well across a range of weather conditions, although dense fog, heavy rain, and snow can still reduce their effective range.

How lidar works. Source: https://www.explainthatstuff.com/lidar.html
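Lidar ranging rests on time of flight: the pulse travels to the target and back, so the distance is half the round-trip path, d = c·t/2. The sketch below is a minimal illustration, not any vendor's processing pipeline; the function names and the axis convention (x forward, y left, z up) are assumptions chosen for the example.

```python
import math

SPEED_OF_LIGHT = 299_792_458.0  # metres per second


def tof_range(round_trip_time_s: float) -> float:
    """Return the one-way distance for a pulse with the given round-trip time.

    The light covers the distance twice (out and back), hence the division by 2.
    """
    return SPEED_OF_LIGHT * round_trip_time_s / 2.0


def to_cartesian(range_m: float, azimuth_deg: float, elevation_deg: float):
    """Convert one return (range plus beam angles) into an (x, y, z) point.

    Assumed convention: x forward, y left, z up; azimuth is measured
    counter-clockwise from x, elevation upward from the x-y plane.
    """
    az = math.radians(azimuth_deg)
    el = math.radians(elevation_deg)
    ground = range_m * math.cos(el)  # projection of the range onto the x-y plane
    return (ground * math.cos(az), ground * math.sin(az), range_m * math.sin(el))


# A return that arrives 200 ns after the pulse left is roughly 30 m away.
r = tof_range(200e-9)
print(round(r, 2))  # 29.98
print(to_cartesian(r, azimuth_deg=30.0, elevation_deg=-2.0))
```

Repeating this conversion for every beam and every firing yields the point cloud that makes up the three-dimensional map.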

Radar (Radio Detection and Ranging)

Radar utilizes electromagnetic waves to detect objects in the vehicle’s surroundings. It can measure the distance, speed, and direction of objects and can detect them even in challenging weather conditions such as rain or fog. Radar is particularly useful for detecting vehicles that are outside the field of view of cameras and lidars.
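The three quantities named above map onto three textbook relations: range from echo delay (d = c·t/2), radial speed from the Doppler shift (v = f_d·c / (2·f_c)), and bearing from the phase difference between two receive antennas (sin θ = λ·Δφ / (2π·s)). The sketch below applies them under stated assumptions: a 77 GHz carrier (a common automotive radar band), antennas spaced half a wavelength apart, and simple pulse timing, whereas many production radars use frequency-modulated continuous-wave (FMCW) chirps and recover range from a beat frequency instead. All names are hypothetical.

```python
import math

SPEED_OF_LIGHT = 299_792_458.0  # metres per second
CARRIER_HZ = 77e9  # assumed carrier frequency (77 GHz automotive band)
WAVELENGTH_M = SPEED_OF_LIGHT / CARRIER_HZ


def echo_range(round_trip_time_s: float) -> float:
    """Distance from the echo delay; the wave travels out and back."""
    return SPEED_OF_LIGHT * round_trip_time_s / 2.0


def radial_speed(doppler_shift_hz: float) -> float:
    """Closing speed in m/s from the Doppler shift (positive = approaching).

    The reflection doubles the shift, so f_d = 2 * v * f_c / c.
    """
    return doppler_shift_hz * SPEED_OF_LIGHT / (2.0 * CARRIER_HZ)


def bearing_deg(phase_diff_rad: float, spacing_m: float = WAVELENGTH_M / 2) -> float:
    """Angle of arrival from the phase difference between two antennas.

    With half-wavelength spacing this reduces to sin(theta) = phase_diff / pi.
    """
    s = WAVELENGTH_M * phase_diff_rad / (2.0 * math.pi * spacing_m)
    return math.degrees(math.asin(s))


print(round(echo_range(1e-6), 1))          # 149.9 (metres)
print(round(radial_speed(5_000.0), 2))     # closing speed in m/s
print(round(bearing_deg(math.pi / 2), 1))  # 30.0 (degrees)
```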

Cameras

Cameras play a crucial role in the perception of the vehicle’s surroundings. They capture images and video, providing high-quality visual information. Paired with computer vision software, camera data is used to identify objects, read traffic signs, and recognize traffic lights. Because cameras capture color and fine detail, they are particularly well suited to these tasks and to pedestrian detection.
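A camera records the direction to each point in the scene but not its distance. The pinhole camera model below (a standard simplification that ignores lens distortion) shows how a 3D point maps to a pixel, and how a rough distance can be inferred when an object's real size is known. The focal length, image centre, and the 1.7 m pedestrian height are illustrative assumptions, not calibration data for any real camera.

```python
FOCAL_PX = 1000.0        # assumed focal length, in pixels
CX, CY = 960.0, 540.0    # assumed principal point (centre of a 1920x1080 image)


def project(x_m: float, y_m: float, z_m: float) -> tuple[float, float]:
    """Project a point in camera coordinates (x right, y down, z forward) to pixels."""
    if z_m <= 0:
        raise ValueError("point is behind the camera")
    return (FOCAL_PX * x_m / z_m + CX, FOCAL_PX * y_m / z_m + CY)


def distance_from_height(real_height_m: float, pixel_height: float) -> float:
    """Similar triangles: pixel_height / FOCAL_PX = real_height / distance."""
    return FOCAL_PX * real_height_m / pixel_height


# A pedestrian assumed to be 1.7 m tall who spans 85 pixels is about 20 m away.
print(round(distance_from_height(1.7, 85.0), 1))  # 20.0
print(project(2.0, 0.5, 20.0))
```

The size-based estimate is only as good as the assumed object height, which is one reason camera data is usually combined with direct range measurements from lidar or radar.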

Ultrasonic Sensors

Ultrasonic sensors use high-frequency sound waves to measure the distance to objects. They are useful for detecting objects in close proximity to the vehicle, such as parked cars or obstacles during maneuvering.
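Ultrasonic ranging uses the same echo principle as lidar and radar, but with sound, which is slow enough that its temperature dependence matters. The sketch uses the common linear approximation for the speed of sound in air, about 331.3 + 0.606·T m/s with T in °C; the function names and the thresholds in `warning_level` are hypothetical values for illustration, not figures from any parking system.

```python
def speed_of_sound(temp_c: float) -> float:
    """Approximate speed of sound in dry air (m/s); linear fit valid near room temperature."""
    return 331.3 + 0.606 * temp_c


def echo_distance(round_trip_time_s: float, temp_c: float = 20.0) -> float:
    """Distance to the obstacle; the sound travels there and back."""
    return speed_of_sound(temp_c) * round_trip_time_s / 2.0


def warning_level(distance_m: float) -> str:
    """Map a distance to a parking-aid alert (hypothetical thresholds)."""
    if distance_m < 0.3:
        return "stop"
    if distance_m < 1.0:
        return "caution"
    return "clear"


d = echo_distance(0.004)  # echo received 4 ms after the ping, at 20 °C
print(round(d, 3), warning_level(d))  # 0.687 caution
```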

Inertial Sensors

Inertial sensors, such as accelerometers and gyroscopes, measure the acceleration and angular velocity of the vehicle.

They are crucial for tracking the vehicle’s motion and providing information about its orientation.

Inertial Sensors, Source: Semanticscholar.org
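Tracking motion from inertial data amounts to integrating the measurements over time: the gyroscope's yaw rate gives the change in heading, and the accelerometer's forward acceleration gives the change in speed. The dead-reckoning sketch below assumes flat ground, gravity already removed from the accelerometer reading, and forward Euler integration; the `Pose2D` type and function names are hypothetical. Because small sensor errors are integrated too, such an estimate drifts over time, which is one reason inertial data is normally combined with other sensors.

```python
import math
from dataclasses import dataclass


@dataclass
class Pose2D:
    x: float = 0.0        # metres
    y: float = 0.0        # metres
    heading: float = 0.0  # radians, 0 = along +x
    speed: float = 0.0    # metres per second


def dead_reckon_step(pose: Pose2D, accel_forward: float, yaw_rate: float, dt: float) -> Pose2D:
    """Advance the pose by one IMU sample using forward Euler integration."""
    # Position advances with the current speed and heading.
    x = pose.x + pose.speed * math.cos(pose.heading) * dt
    y = pose.y + pose.speed * math.sin(pose.heading) * dt
    heading = pose.heading + yaw_rate * dt    # gyroscope: angular velocity -> heading
    speed = pose.speed + accel_forward * dt   # accelerometer: acceleration -> speed
    return Pose2D(x, y, heading, speed)


# Drive straight at 10 m/s for 1 s, sampled at 100 Hz.
pose = Pose2D(speed=10.0)
for _ in range(100):
    pose = dead_reckon_step(pose, accel_forward=0.0, yaw_rate=0.0, dt=0.01)
print(round(pose.x, 3), round(pose.y, 3))  # 10.0 0.0
```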

Sensors and Perception

The Crucial Role of Sensors in Autonomous Vehicles

Sensors play a vital role in the functioning of autonomous vehicles. They serve as the eyes and ears of these vehicles, allowing them to perceive and understand their surroundings.

Without sensors, autonomous vehicles would lack the ability to gather real-time data about their environment, making it impossible for them to navigate safely and make informed decisions.

Sensors such as radar, lidar, cameras, ultrasonic sensors, and inertial sensors work together to create a comprehensive perception system. They collect valuable information about the vehicle’s surroundings, including the presence of objects, their distance, speed, and direction. This data is processed using advanced algorithms to enable the vehicle to identify objects, predict their trajectories, and make accurate driving decisions. The integration of sensors is essential for the reliable and safe operation of autonomous vehicles.

Integration for Enhanced Understanding: The Power of Sensor Combination

The combination of different sensors in autonomous vehicles leads to enhanced understanding of the environment. By integrating sensors such as lidar, radar, and cameras, the vehicle can obtain a more comprehensive and detailed view of its surroundings. Each sensor brings its unique capabilities to the system. Lidar provides high-resolution three-dimensional mapping, radar offers robust object detection even in challenging weather conditions, and cameras provide visual information for object recognition. By fusing the data from these sensors, the vehicle can overcome individual limitations and achieve a more accurate perception of its environment.
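One standard way to fuse independent measurements of the same quantity is inverse-variance weighting: each sensor's estimate is weighted by its precision, and the fused result is more precise than any single input. This is a minimal sketch, not the fusion method of any particular vehicle; the function name, range values, and variances below are made-up numbers chosen to show lidar as the more precise ranger. The measurement update of a Kalman filter, widely used for tracking, performs this same kind of weighting repeatedly over time.

```python
def fuse(estimates: list[tuple[float, float]]) -> tuple[float, float]:
    """Combine independent (value, variance) estimates by inverse-variance weighting.

    Returns the fused value and its variance, which is smaller than any input variance.
    """
    weights = [1.0 / variance for _, variance in estimates]
    total = sum(weights)
    value = sum(w * v for w, (v, _) in zip(weights, estimates)) / total
    return value, 1.0 / total


lidar = (25.0, 0.01)  # range in metres, variance in m^2 (illustrative)
radar = (25.6, 0.09)
value, variance = fuse([lidar, radar])
print(round(value, 2), round(variance, 4))  # 25.06 0.009
```

The fused range lands close to the lidar value because lidar was assumed to be nine times more precise, yet the radar reading still nudges it and tightens the overall uncertainty.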

Sensor integration also enables data filtering and verification, reducing the likelihood of misinterpretations or false positives. The power of sensor combination lies in its ability to provide a more complete and reliable understanding of the environment, ultimately contributing to safer autonomous driving experiences.
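Verification can be as simple as requiring agreement: a detection from one sensor is accepted only if another sensor reports an object nearby. The sketch below confirms camera detections against radar detections using a distance gate; it assumes both lists are already expressed in the same vehicle coordinate frame (in metres), and the function name and the 1.5 m gate are hypothetical choices for illustration.

```python
import math

Point = tuple[float, float]  # (x, y) in metres, vehicle frame


def confirm_detections(camera: list[Point], radar: list[Point], gate_m: float = 1.5) -> list[Point]:
    """Keep only camera detections that have a radar detection within gate_m."""
    confirmed = []
    for cx, cy in camera:
        if any(math.hypot(cx - rx, cy - ry) <= gate_m for rx, ry in radar):
            confirmed.append((cx, cy))
    return confirmed


camera = [(12.0, 0.5), (30.0, -4.0)]  # the second might be a shadow or a reflection
radar = [(12.4, 0.2)]
print(confirm_detections(camera, radar))  # [(12.0, 0.5)]
```

A strict agreement rule lowers false positives at the cost of possibly discarding real objects that only one sensor sees, so a real system would need to balance the two.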

Perception: Unveiling the Environment Through Complex Algorithms

Perception in autonomous vehicles involves the use of complex algorithms to interpret the data collected by sensors. These algorithms analyze sensor data to identify and classify objects such as vehicles, pedestrians, cyclists, traffic signs, and obstacles.

By assessing the trajectories and behaviors of these objects, the system can predict their future actions and make informed driving decisions. Machine learning and deep learning algorithms play a crucial role in object recognition and understanding. Through extensive training on labeled data, these algorithms can distinguish between different objects and accurately classify them. This capability allows the vehicle to respond to changes in the environment and make safe and timely decisions.
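The text describes prediction with machine learning; a common and much simpler baseline is to assume that each object keeps its current velocity. The sketch below implements that constant-velocity model, not a learned predictor. It estimates velocity from the last two observed positions, and the function name and sampling interval are assumptions for illustration.

```python
Point = tuple[float, float]


def predict_constant_velocity(track: list[Point], dt: float, steps: int) -> list[Point]:
    """Extrapolate an object's path assuming its latest velocity stays constant.

    track: observed (x, y) positions in metres, sampled every dt seconds.
    Returns the predicted positions for the next `steps` samples.
    """
    if len(track) < 2:
        raise ValueError("need at least two observations to estimate velocity")
    (x0, y0), (x1, y1) = track[-2], track[-1]
    vx, vy = (x1 - x0) / dt, (y1 - y0) / dt
    return [(x1 + vx * k * dt, y1 + vy * k * dt) for k in range(1, steps + 1)]


# A cyclist observed at 0.5 s intervals, moving 2 m/s forward and 1 m/s sideways.
print(predict_constant_velocity([(0.0, 0.0), (1.0, 0.5)], dt=0.5, steps=3))
# [(2.0, 1.0), (3.0, 1.5), (4.0, 2.0)]
```

Learned predictors aim to beat this baseline in exactly the cases where it fails, such as a pedestrian about to stop or a car about to turn.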

Perception algorithms are continuously updated and refined to adapt to different scenarios and improve accuracy. They are an essential component of autonomous driving systems, enabling vehicles to navigate their surroundings with confidence.

Unwavering Performance: Sensors in Various Conditions and Situations

Autonomous vehicles operate in diverse conditions and situations, including adverse weather conditions like rain, fog, or snow. The performance of sensors under these challenging circumstances is crucial for maintaining a high level of safety and reliability.

Sensor manufacturers have made significant advancements to ensure the robustness of their devices in adverse weather conditions. Lidar systems, for example, have improved capabilities to penetrate rain and fog, enabling accurate distance measurements. Radar sensors have been optimized to detect objects even in low-visibility scenarios. Cameras have undergone advancements in low-light imaging and image processing techniques, allowing them to capture clear and usable images even at night.

Moreover, sensor redundancy is essential for unwavering performance. By integrating multiple sensors of the same or different types, the system can cross-validate data and mitigate the impact of sensor failures or limitations. Sensor technology continues to evolve, steadily improving the reliability and performance of autonomous vehicles under various conditions.
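With three redundant sensors measuring the same quantity, a median vote tolerates one failed or faulty unit, because a single outlier cannot drag the median away from the two agreeing readings. The sketch below returns the voted value and flags readings that disagree with it by more than a tolerance; the function name, representing a sensor that returned nothing as `None`, and the tolerance value are assumptions for the example.

```python
import statistics
from typing import Optional


def vote(readings: list[Optional[float]], tolerance: float) -> tuple[float, list[int]]:
    """Median vote over redundant readings.

    Returns the median of the valid readings and the indices of sensors that
    either returned nothing (None) or deviate from the median by more than tolerance.
    """
    valid = [r for r in readings if r is not None]
    if not valid:
        raise ValueError("no valid readings")
    voted = statistics.median(valid)
    suspect = [i for i, r in enumerate(readings) if r is None or abs(r - voted) > tolerance]
    return voted, suspect


print(vote([20.1, 19.9, 55.0], tolerance=1.0))  # (20.1, [2])
print(vote([20.1, None, 19.9], tolerance=1.0))
```

Once one sensor has dropped out, the median of the remaining two is simply their average, so a second fault could no longer be isolated; this is why redundancy levels are chosen with the expected number of simultaneous failures in mind.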

Advancing Technology: The Future of Sensor and Perception Systems

The field of sensor and perception technology for autonomous vehicles is continuously advancing, with exciting possibilities on the horizon. Researchers and engineers are actively exploring new sensor technologies and enhancing existing ones to improve the reliability, accuracy, and speed of data collection. One area of focus is the development of next-generation lidar sensors, aiming for higher resolution, wider scanning angles, and longer detection ranges. These advancements are expected to enable a more detailed and comprehensive perception of the environment. Efforts are also underway to reduce the size and cost of lidar sensors, making them more accessible for widespread adoption.
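The link between angular resolution and detection range is simple geometry: at range R, adjacent beams separated by an angle Δθ land about R·Δθ apart (arc length, accurate for small angles), so a distant object is hit by fewer points. The sketch below computes that spacing; the function names, the 0.5 m target width, and the resolution figures are illustrative assumptions, not specifications of any product.

```python
import math


def point_spacing(range_m: float, angular_resolution_deg: float) -> float:
    """Approximate gap between neighbouring lidar points at a given range (R * dtheta)."""
    return range_m * math.radians(angular_resolution_deg)


def points_on_target(target_width_m: float, range_m: float, angular_resolution_deg: float) -> int:
    """Rough count of horizontally adjacent beams that hit a target of the given width."""
    return int(target_width_m / point_spacing(range_m, angular_resolution_deg))


# A 0.5 m wide pedestrian at 100 m, with 0.2 degree versus 0.05 degree resolution.
for res in (0.2, 0.05):
    print(res, round(point_spacing(100.0, res), 3), points_on_target(0.5, 100.0, res))
```

Quartering the angular step roughly quadruples the number of returns on a far-away object, which is why higher resolution and longer range tend to be pursued together.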

Additionally, radar and camera technologies are being further enhanced. Advanced radar systems are being developed to provide more precise measurements and improved object detection capabilities. Cameras are benefiting from advancements in image processing algorithms, enabling faster and more accurate object recognition.

Integration is another key aspect of future sensor and perception systems. The seamless fusion of data from multiple sensors will continue to be a focus, allowing for a more holistic understanding of the environment and improved decision-making.

Machine learning algorithms will also continue to evolve, enabling more advanced object recognition, prediction, and decision-making capabilities. The integration of artificial intelligence techniques will enhance the adaptive nature of autonomous vehicles, allowing them to learn and improve their performance over time.

The future of sensor and perception systems in autonomous vehicles holds great promise. Ongoing research and development efforts are paving the way for even safer, more efficient, and more reliable autonomous driving experiences. As technology advances, sensors and perception systems will continue to play a pivotal role in shaping the future of transportation, revolutionizing the way we travel and interact with our vehicles.
